Fabulou$ Fable Arrive$; Agents > Search; AI Farming Sprouts

Today’s AI Outlook: ⛅️

Claude’s New Superpower Comes With A Meter

Anthropic launched Claude Fable 5, the public-facing version of its long-teased Mythos-class model, while reserving Claude Mythos 5 for vetted cybersecurity partners. The pitch is simple: the best Claude yet, broader access than before, and enough guardrails to keep the spicy stuff routed elsewhere. The catch is also simple: it costs $10 per million input tokens and $50 per million output tokens, making it the priciest Claude to date. Quite the conundrum.
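For a rough feel of what that meter reads, here is a back-of-the-envelope sketch using only the two list prices quoted above. The function name and the sample token counts are hypothetical, chosen purely for illustration. Real bills may differ if there are discounts, caching or other pricing details not covered here.

```python
# Prices quoted in the story, in USD per million tokens.
INPUT_PRICE_PER_M = 10.0
OUTPUT_PRICE_PER_M = 50.0


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at the quoted rates."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload (an assumption, not from the story):
# 200k tokens in, 20k tokens out.
print(f"${estimate_cost(200_000, 20_000):.2f}")  # prints $3.00
```

A few dollars per large request sounds tame until an agent loops through thousands of them, which is one plausible way testing bills reach the figures pilot partners reported.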

Why it matters

Fable appears to deliver on the hype, with major gains in coding, reasoning and knowledge work. But the new frontier may be less about capability and more about affordability. A model that can migrate massive codebases or run autonomous science workflows is impressive. A model that racks up millions in testing costs is a procurement meeting with a cape.

The Deets

- Fable 5 is available across Claude subscription tiers until June 22, then moves to separate usage credits.

- Mythos 5 is available to Anthropic’s Project Glasswing partners, with looser cybersecurity restrictions than Fable.

- Fable routes sensitive topics like cybersecurity, biology and chemistry to Opus 4.8.

- Pilot partners reportedly saw testing costs run into the millions of dollars.

- Stripe reportedly used it to migrate a 50-million-line codebase in a day.

Key takeaway

Anthropic may have built the model everyone wants to try, but the pricing makes it feel less like a daily driver and more like a private jet for tokens.

🧩 Jargon Buster - Guardrails: Safety rules that restrict what an AI model can answer, generate or help users do.

⚡ Power Plays

OpenAI’s Valuation Is Too Hot To Hold

SoftBank’s effort to raise at least $6B through a margin loan backed by its OpenAI stake has stalled, according to reporting in AI Secret. The target had already been cut from $10B, and some lenders balked at the challenge of valuing OpenAI as an unlisted company. That pause came even as OpenAI reportedly filed confidentially for a U.S. IPO and lined up Goldman Sachs and Morgan Stanley for a possible listing as soon as this fall.

Why it matters

OpenAI is valuable enough to move SoftBank’s balance sheet, but lenders still need a number they can defend. AI valuations have been soaring, but credit markets care less about vibes and more about collateral math. That makes OpenAI the rare asset everyone wants exposure to, while still making bankers sweat through their Patagonia vests.

The Deets

- SoftBank had reportedly lined up about $5B before talks stalled.

- The financing was tied to the value of SoftBank’s OpenAI stake.

- OpenAI’s private-market status made valuation harder for potential lenders.

- SoftBank has benefited heavily from OpenAI-driven gains.

- The IPO filing could eventually give markets a clearer price.

Key takeaway

The market loves OpenAI’s upside, but borrowing against it is harder when nobody agrees where the ceiling, floor or actual floor plan is.

🧩 Jargon Buster - Margin Loan: A loan backed by financial assets, where lenders can demand more collateral if the asset value falls.

Agents Put Knowledge Work On The Clock

Perplexity and Harvard Business School studied how AI agents change knowledge work by comparing 10,000 identical queries sent to Perplexity Search and its Computer platform. Search answered fast, averaging 33 seconds, while Computer worked for 26 minutes on average. But a quick answer still leaves the rest of the job to the user: Perplexity estimated that the same workflow would take a user 269 minutes with Search versus 36 minutes with Computer.
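The gap between the two workflow estimates is easy to check. This is a minimal sketch that uses only the 269-minute and 36-minute figures from the study as reported here. The helper name is hypothetical.

```python
def compare_workflows(search_minutes: float, computer_minutes: float) -> tuple[float, float]:
    """Return (minutes saved, speedup factor) for the agent route versus search."""
    saved = search_minutes - computer_minutes
    speedup = search_minutes / computer_minutes
    return saved, speedup


# Estimated end-to-end workflow times reported in the study.
saved, speedup = compare_workflows(269, 36)
print(f"Saved {saved} minutes, about {speedup:.1f}x faster")
# prints: Saved 233 minutes, about 7.5x faster
```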

Why it matters

The interesting part is not only time saved. Users gave the agent harder, more creative and more cross-disciplinary work. When people used the agentic route, they were more likely to ask for documents, code, visuals and work outside their own field. That suggests agents may change ambition, not just efficiency.

The Deets

- Half of Computer tasks involved creating something new, about 2x the rate of Search.

- Work outside a user’s field rose nine points to 59%.

- Computer users asked for more complex outputs across multiple fields.

- Search remained quicker for lookups, but left the “doing” to the user.

- The study framed agents as tools that can shift workflows from retrieval to execution.

Key takeaway

AI agents may make workers faster, but the bigger shift is that they make people ask for bigger things.

🧩 Jargon Buster - Agentic AI: AI that can plan and complete multi-step tasks, rather than just answering one prompt at a time.

🛠️ Tools & Products

Farm Coder Has Entered The Chat

OpenAI profiled Hiroki Tomiyasu, a self-taught farmer in Hokkaido, Japan, who uses ChatGPT and Codex to build automation for a roughly 100-hectare farming operation. His AI stack helps with satellite crop monitoring, plant disease diagnosis, greenhouse vent controls, pesticide records and group-chat bots for farm operations.

Why it matters

This is the clearest version of “AI as an engineering department” for people who do not have one. Instead of waiting for a specialized ag-tech vendor, Tomiyasu is building his own tools for the problems in front of him. The broccoli now has better software support than many offices.

The Deets

- Tomiyasu grows soybeans, green onions, pumpkins and broccoli.

- AI helps analyze satellite imagery and monitor crops.

- Codex helped create greenhouse controls that raise and lower vents by text.

- He built an Airtable hub for farm records, logs and feeds.

- He described AI as an always-available engineer.

Key takeaway

AI’s most useful products may come from people who know the problem deeply and can now build the missing software themselves.

🧩 Jargon Buster - Codex: OpenAI’s coding assistant for writing, editing and automating software tasks.

Late Payments Meet Their Algorithmic Match

Australian startup Earlytrade raised $10M and is applying agentic AI to construction payments. The company helps subcontractors trade a portion of an invoice for faster cash, such as taking $98,000 today instead of waiting two months for $100,000. Its AI agents will learn each subcontractor’s cash flow needs and set discounts in real time.
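To see what that trade costs the subcontractor, the example can be turned into an implied annual rate. This is a sketch under stated assumptions: "two months" is treated as 60 days, the rate is annualized with simple (non-compounding) interest, and the function name is hypothetical. It is not a description of how Earlytrade's agents actually price discounts.

```python
def implied_annual_rate(invoice: float, early_payment: float, days_early: int) -> float:
    """Simple annualized cost of accepting early_payment instead of invoice.

    Assumes simple (non-compounding) annualization over a 365-day year.
    """
    discount = invoice - early_payment
    # Rate paid for the period, relative to the cash actually received.
    period_rate = discount / early_payment
    return period_rate * 365 / days_early


# Figures from the story; 60 days is an assumption standing in for "two months".
rate = implied_annual_rate(100_000, 98_000, 60)
print(f"{rate:.1%}")  # prints 12.4%
```

A 2% haircut looks small on one invoice, but annualized it is a double-digit financing cost, which is why pricing it precisely matters to both sides.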

Why it matters

Construction’s payment delays are ancient, expensive and deeply baked into the power structure of the industry. General contractors and owners often benefit from slow payments, while smaller subcontractors carry the cash crunch. Earlytrade’s bet is that agents can price urgency with brutal precision.

The Deets

- Construction is reported to be a $2.17T industry.

- Specialty subcontractors account for about $875B.

- Payment delays often stretch 60 to 90 days.

- Earlytrade’s agents will evaluate cash flow, timing and project-level needs.

- The model turns delayed payment into a real-time financing market.

Key takeaway

AI agents are moving into the unglamorous corners of business, where waiting for money is the whole business model.

🧩 Jargon Buster - Dynamic Discounting: A payment system where suppliers accept less money in exchange for getting paid sooner.

💰 Funding & Startups

Robots Get A $200M Industrial Glow-Up

Standard Bots raised $200M at a $1B valuation for AI-native industrial robots, according to AI Secret.

Why it matters

Industrial robotics is getting pulled into the AI-native era, where the software brain matters as much as the metal arm. Investors are betting that robots can become easier to train, deploy and adapt across factory tasks.

The Deets

- The company focuses on AI-native industrial robots.

- The news landed alongside broader momentum in AI agents and automation.

Key takeaway

The AI boom is leaving the browser and heading for the factory floor.

🧩 Jargon Buster - AI-Native Robot: A robot designed around AI decision-making from the start, rather than adding AI features later.

🔬 Research & Models

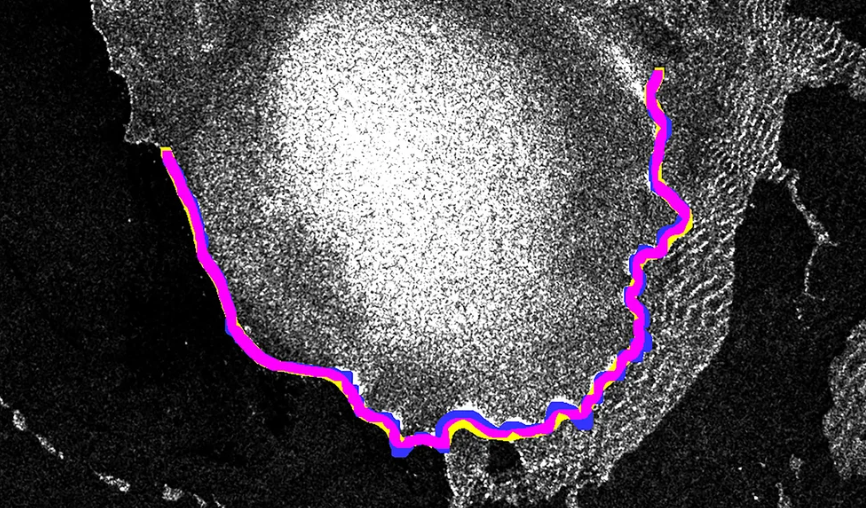

AI Gives Glaciers A Monthly Checkup

Researchers at Friedrich-Alexander University in Germany improved a glacier-tracking model so it could map calving fronts, the edges where icebergs break away, with far less human labeling. Using one hand-labeled image per glacier, summer reference images and bedrock maps, the system cut error from more than a kilometer to 68.7 meters, roughly human-labeling territory.

Why it matters

Climate monitoring has long been limited by human attention. There are too many glaciers and too few analysts tracing satellite images by hand. This system shifts glacier tracking from occasional snapshots to monthly monitoring across huge regions, giving researchers a much sharper view of how ice is changing.

The Deets

- The team mapped monthly fronts for 145 glaciers in Norway’s Svalbard archipelago.

- The work covered nine years of satellite imagery.

- The system produced more than 203,000 annotations.

- Researchers are now looking toward 1,500 more Arctic glaciers.

- The core breakthrough was scaling an existing approach with minimal new labels.

Key takeaway

AI did not make glaciers melt faster. It made the evidence harder to miss.

🧩 Jargon Buster - Calving Front: The edge of a glacier where chunks of ice break off into the ocean.

⚡ Quick Hits

- Google cut into the AI subscription price war, pressuring rivals to justify premium chatbot plans.

- Microsoft AI CEO Mustafa Suleyman criticized Anthropic for discussing Claude in ways that imply possible model consciousness.

- Apple is pushing back on Europe’s DMA rules as Siri AI and Apple Intelligence features remain limited there.

- AI coding tools have reached 97% adoption, but governance still lags.

- JPMorgan plans to deploy more powerful AI agents across the bank this year.

- New York became the first U.S. state to require ads to disclose AI-generated actors, with $1,000 fines and SAG-AFTRA support.

- OpenAI released interactive charts in ChatGPT, bringing in-line graphs directly into the chat flow.

- China is mapping out a $295B, five-year data center buildout with a heavy push toward domestic suppliers.

🧰 Tools Of The Day

- Dexter: An open-source financial research agent that can read earnings reports, interpret SEC filings and pull market data for stock research briefs.

- Codex: OpenAI’s coding tool showed up on a Japanese farm, helping build greenhouse automation, crop monitoring workflows and farm software.

- MyClaw: A cloud layer for OpenClaw that connects to Gmail, Slack, Google Docs, Sheets, Notion, GitHub, Telegram and WhatsApp.

- Gemini 3.5 Live Translate: Google’s real-time voice translation model supports 70+ languages while preserving tone and pacing.

- North Mini Code: Cohere’s first open-source agentic coding model.

- Kimi Work: Moonshot’s desktop agent that can run up to 300 parallel agents.

- LaunchDarkly AgentControl: A production-control tool for AI agents that lets teams adjust behavior without a full redeploy.

Today’s Sources: The Internet, AI Secret, The Rundown AI