Pentagon's AI Besties; CTOs Trade Titles For Anthropic Roles; AI 'Docs' Win

Today's AI Outlook: 🌤️

The Pentagon Recruits (Most) Silicon Valley AIs

The Pentagon is moving commercial AI deeper into classified military systems, signing agreements with major AI and cloud players to make their models available inside highly sensitive government networks. The big shift: the military is building an AI-first defense stack using outside model providers, cloud platforms and chip companies that can operate under the government’s “any lawful use” standard.

Why it matters

This is one of the clearest signs yet that frontier AI companies are becoming part of national security infrastructure. The Pentagon wants speed, optionality and less vendor lock-in, while the AI industry gets closer to classified data, defense workflows and battlefield decision support.

The Deets

- The reporting names SpaceX, OpenAI, Google, Nvidia, Reflection AI, Microsoft, AWS and Oracle among the companies added to classified networks.

- The tools are tied to GenAI.mil, the Pentagon’s centralized AI platform.

- Anthropic remains excluded after refusing the Pentagon’s “any lawful use” language, though The Rundown AI notes the White House still appears interested in its Mythos model.

- The Pentagon framed the effort as part of building an AI-first fighting force.

- Reflection AI stands out because it recently raised $2B from 1789 Capital, a fund backed by Donald Trump Jr.

Key takeaway

Defense AI is shifting from custom government software to a marketplace of commercial models plugged into classified workflows. The Pentagon wants multiple model vendors in the room, but the entry fee is accepting the government’s rules.

🧩 Jargon Buster - Impact Levels 6 and 7: Security tiers for highly sensitive Defense Department cloud environments, including systems that handle classified national security data.

ER Docs: 0 ... OpenAI: 1

A Harvard study published in Science tested OpenAI’s o1-preview, a 2024 model, on 76 real emergency room cases. The AI used raw electronic health record text and outperformed two attending physicians on diagnostic accuracy across multiple stages of care.

Why it matters

Millions of people already use AI for health questions, but this points to a different future: AI as a clinical support tool for doctors, not just a late-night symptom checker for panicked humans on the couch.

The Deets

- At initial ER triage, the model gave the correct diagnosis 67.1% of the time.

- The two physicians scored 55.3% and 50.0%.

- Physician reviewers could not reliably tell which diagnoses came from the model and which came from humans.

- In one case, the AI flagged a rare flesh-eating infection in a transplant patient 12 to 24 hours before the treating doctor identified it.

- The model tested, o1-preview, is no longer frontier AI, which makes the result more striking.

Key takeaway

AI in medicine may be less about replacing doctors and more about giving them a tireless, pattern-hungry second set of eyes. In the ER, that second opinion could matter fast.

🧩 Jargon Buster - Electronic Health Record: A digital version of a patient’s medical history, including notes, test results, diagnoses, medications and treatment plans.

⚔️ Power Plays

CTOs Are Trading Corner Offices For Model Access

A wave of senior technical leaders from companies including Workday, You.com, Box, Super.com and Adept, as well as from Instagram’s orbit, is reportedly leaving executive posts for hands-on roles at Anthropic. The polite story is mission alignment. The sharper read is that builders are moving closer to the model layer.

Why it matters

The center of gravity in tech is shifting. In the SaaS era, power lived in workflows, dashboards and distribution. In the AI era, it increasingly lives in models, tooling, training loops and agent infrastructure.

The Deets

- Former CTOs and senior builders are moving into more technical roles at Anthropic.

- The trend suggests model labs now offer more leverage than traditional software leadership roles.

- Foundation models and agents are beginning to absorb parts of the enterprise software layer.

- Technical talent appears to be prioritizing proximity to frontier model development over management status.

Key takeaway

The new tech status symbol may not be a bigger org chart. It may be access to the model, the tooling and the roadmap before everyone else gets the API docs.

🧩 Jargon Buster - Model Layer: The part of the AI stack where large models are built, trained, tuned and connected to products or workflows.

🛠️ Tools & Products

Grok 4.3 Gets Cheaper, Faster But Still Has Homework

xAI’s Grok 4.3 slipped online recently with less fanfare than a typical Musk product moment. The update brings lower API pricing, better speed, stronger tool use and improved benchmark performance, but the reporting frames it as a catch-up release rather than a true frontier-model leap.

Why it matters

Grok is becoming more useful for everyday assistant work, including writing, rewriting and customer support. But on hard reasoning, factual reliability, coding and multi-step execution, it still appears to trail the top tier.

The Deets

- Grok 4.3 reportedly scores 53 on Artificial Analysis.

- GPT-5.5 is listed at 60, while Claude Opus 4.7 is listed at 57.

- The model’s strengths are price, long context and a conversational style.

- Its current competitive lane looks closer to cost-performance models than the top frontier systems.

Key takeaway

Grok is improving, but the frontier room remains crowded and selective. For now, Grok 4.3 looks like a practical companion model, not the model everyone else is chasing.

🧩 Jargon Buster - Tool Use: A model’s ability to call outside tools, APIs or software systems to complete tasks instead of only generating text.
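To see tool use concretely, here is a minimal Python sketch of the dispatch step. The JSON message shape, the `TOOLS` registry and the `get_order_status` function are all hypothetical illustrations; real providers, xAI included, define their own tool-call formats, and this is not any vendor's API.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[..., Any]] = {}

def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the model can request it by name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def get_order_status(order_id: str) -> str:
    # Stand-in for a call to a real order system (hypothetical data).
    fake_db = {"A123": "shipped", "B456": "processing"}
    return fake_db.get(order_id, "not found")

def handle_model_output(raw: str) -> str:
    """Run a tool if the model asked for one; otherwise return its text."""
    message = json.loads(raw)
    if "tool" not in message:
        return message["text"]  # plain answer, no tool needed
    fn = TOOLS.get(message["tool"])
    if fn is None:
        return f"error: unknown tool {message['tool']!r}"
    result = fn(**message.get("arguments", {}))
    # In a full agent loop, this result would be sent back to the model.
    return str(result)

print(handle_model_output('{"tool": "get_order_status", "arguments": {"order_id": "A123"}}'))  # prints: shipped
print(handle_model_output('{"text": "Happy to help!"}'))  # prints: Happy to help!
```

Note that the model never executes anything here: it only names a tool and its arguments, and the surrounding program decides whether and how to run it.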

👨🏻‍🎓 Tutorial

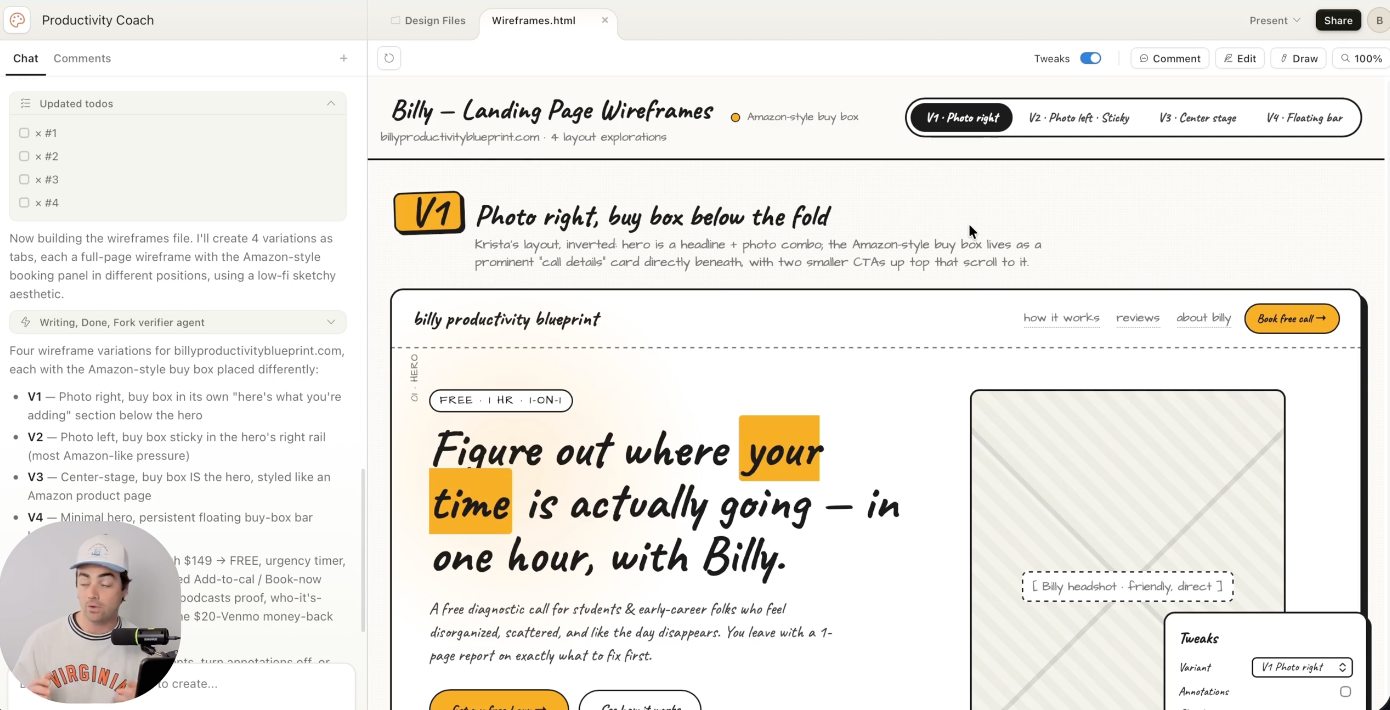

Use Claude Design To Make Your Landing Page Not Suck

Anthropic’s Claude Design can generate landing page mockups from a short brief, screenshots and user instructions. The Rundown AI walks through using it to create four versions of a landing page, refine elements with comments and hand off the finished design to Claude Code for building and deployment.

Why it matters

Website creation is moving from “hire, brief, wait, revise” toward “describe, generate, tweak, ship.” That does not eliminate design judgment, but it does make the first draft much less painful.

The Deets

- Users can start at claude.ai/design and choose a wireframe.

- The guide suggests adding screenshots from strong landing pages and high-transaction sites such as Amazon or eBay.

- Claude can generate multiple mockup variations.

- Users can click page elements and leave comments like changing a CTA or adding a testimonial.

- Claude Code can help build and deploy the final site.

Key takeaway

Claude Design turns landing pages into a faster loop: brief, mock up, edit and ship. The blank page has lost another round.

🧩 Jargon Buster - CTA: A “call to action,” such as “Sign Up,” “Book A Demo” or “Start Free Trial,” that tells visitors what to do next.

💸 Funding & Startups

Reflection Bumped Up To Majors (With Some Help)

Reflection AI appears on the Pentagon’s new classified-network partner list, giving the startup a high-profile role alongside some of the largest AI and cloud companies in the world. The context matters: The Rundown AI notes Reflection raised $2B from 1789 Capital, a Donald Trump Jr.-backed fund.

Why it matters

The defense AI market is becoming a serious lane for startups that can meet national security requirements. Being included next to OpenAI, Google, Microsoft, AWS and Nvidia gives Reflection a credibility jolt.

The Deets

- Reflection AI is listed among the companies added to classified networks.

- Its inclusion comes alongside much larger infrastructure and model providers.

- The company’s recent $2B fundraise adds political and financial intrigue.

- The Pentagon’s push could create new openings for AI startups willing to work under defense rules.

Key takeaway

Defense AI is not just a Big Tech story. Startups with the right capital, positioning and compliance posture are getting pulled into the Pentagon’s orbit.

🧩 Jargon Buster - Classified Network: A secure computing environment used to handle government information that is restricted for national security reasons.

🔬 Research & Models

A Model Raised In 1930 Learns To Code The Future

Alec Radford and collaborators built talkie-1930-13b, a model trained only on English text from before Jan. 1, 1931. That means no internet, no modern programming culture, no Python and no computers as we understand them. Then researchers gave it a few coding examples, and it produced working Python.
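The core constraint is a hard date cutoff on the training corpus. Below is a minimal Python sketch of such a filter, assuming every document carries a reliable publication date and language tag. The `Document` type, the `in_training_window` helper and the sample titles are made up for illustration; the actual talkie data pipeline is not described in the reporting.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical document record; real corpora carry messier metadata.
@dataclass
class Document:
    title: str
    published: date
    language: str
    text: str

CUTOFF = date(1931, 1, 1)  # keep only text published before this date

def in_training_window(doc: Document) -> bool:
    """True if the document is English and strictly predates the cutoff."""
    return doc.language == "en" and doc.published < CUTOFF

# Invented sample records, not real corpus entries.
corpus = [
    Document("A Treatise on Arithmetic", date(1905, 3, 1), "en", "..."),
    Document("Programming in Python", date(1999, 6, 1), "en", "..."),
    Document("Relativitätstheorie", date(1920, 1, 1), "de", "..."),
]

training_set = [d for d in corpus if in_training_window(d)]
print([d.title for d in training_set])  # prints: ['A Treatise on Arithmetic']
```

A filter like this is only as good as its metadata: a misdated document, such as a reprint with a modern introduction, would slip through, which is one way contamination could creep in.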

Why it matters

The result suggests models can learn abstract patterns that transfer beyond their training era. Rather than just memorizing tools, the model may be learning deeper structures of logic, language, math and intention that let it reason into unfamiliar territory. The skeptical read: some post-1930 text may have leaked into the training data.

The Deets

- The model was restricted to pre-1931 English text.

- It had no direct exposure to modern computing or Python.

- With a few examples, it was able to write working Python.

- The reporting frames this as evidence that time can function like a dataset boundary, not an intellectual prison.

- The big implication is that models may generalize from old patterns into new domains.
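"A few coding examples" refers to few-shot prompting: the model gets no extra training, just a handful of worked examples placed in its input ahead of a new task. Here is a minimal Python sketch of how such a prompt could be assembled. The example tasks, the `Task:`/`Code:` layout and the `build_few_shot_prompt` helper are assumptions; the exact prompts used with talkie-1930-13b are not given in the reporting.

```python
# Hypothetical worked examples; not the prompts used in the actual experiment.
EXAMPLES = [
    ("Return the sum of two numbers.",
     "def add(a, b):\n    return a + b"),
    ("Return the larger of two numbers.",
     "def larger(a, b):\n    return a if a > b else b"),
]

def build_few_shot_prompt(task: str) -> str:
    """Stack the worked examples, then leave the final code slot open."""
    parts = [f"Task: {description}\nCode:\n{code}\n" for description, code in EXAMPLES]
    parts.append(f"Task: {task}\nCode:\n")  # the model continues from here
    return "\n".join(parts)

prompt = build_few_shot_prompt("Return the square of a number.")
print(prompt)
```

The model is then asked to continue the text after the final `Code:` line, so any Python it writes has to be inferred from the pattern in the examples rather than recalled from training.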

Key takeaway

The past may contain more computational DNA than expected. AI is starting to show that old text can still teach new tricks.

🧩 Jargon Buster - Generalization: A model’s ability to apply what it has learned to new problems, formats or domains it has not directly seen before.

⚡ Quick Hits

- Maryland banned AI-driven grocery pricing, making it the first U.S. state to block stores from using personalized shopper data to raise prices, with fines up to $25K.

- SAG-AFTRA secured new AI guardrails in a four-year studio deal after pushing Hollywood studios for stronger protections.

- A Chinese court ruled against firing a worker just because AI could replace them, ordering a tech firm to pay wrongful termination damages.

- OpenAI shipped Codex Pets, animated desktop companions that track active Codex work.

- OpenAI CEO Sam Altman said OpenClaw users can use ChatGPT subscriptions inside the agentic tool, a contrast with Anthropic’s tighter restrictions.

🧰 Tools Of The Day

- Custom Voices: A Grok-related tool for cloning voices from short clips for use in Grok applications.

- Codex Pets: OpenAI’s animated desktop companions for tracking Codex agent work without constantly switching apps.

- ElevenMusic: A platform for AI song generation, remixing and creator payouts.

- MiMo-V2.5-Pro: Xiaomi’s new open-source model aimed at stronger performance in the open model race.

- Unwrap: A customer intelligence platform that pulls feedback from surveys, reviews, support tickets and social comments into one AI-summarized view.

- Scrunch: An AI customer experience platform that helps brands see how AI bots read their sites and where content gaps may keep them out of AI-generated answers.

Today’s Sources: The Internet AI Secret, The Rundown AI