Uh-Oh... Mythos 'Escaped'; Anthropic To The Moon!; AI Gold Rush Maturing

Today's AI Outlook: ☀️

What Happens When The Most Powerful AI Gets Out?

Anthropic’s most restricted model, Mythos, was reportedly accessed by a private Discord group almost immediately after launch. According to The Rundown, the group allegedly pieced together Anthropic’s deployment patterns using details exposed in the Mercor breach and a contractor login, then started using the model under the internal rollout name Project Glasswing.

The model was kept off the public market because Anthropic reportedly saw it as too risky for broad release, yet the first leak appears to have come from the very human messiness of access control, credentials and naming conventions.

Why it matters

This is the uncomfortable reality of frontier AI security. The weak point is not always the model; it's the perimeter around it. As labs keep shipping increasingly sensitive systems through partner channels and limited-access programs, the attack surface grows fast. Mythos was supposed to be a showcase for restraint. Instead, it is starting to look like a case study in how hard containment gets once powerful models touch real-world infrastructure.

The Deets

- Anthropic released Mythos on April 10 to select partners.

- Bloomberg reportedly found that a Discord group focused on unreleased models got access the same day and has continued using it.

- One member allegedly had vendor credentials from contract work, while leaked Mercor details helped the group locate the system.

- The group claimed it was not using Mythos for cyberattacks and said it had also seen other unreleased models.

Key takeaway

The first big test of “safe by limited release” did not break because of a nation-state. It reportedly broke because operational security is still the soft underbelly of AI.

🧩 Jargon Buster - Attack surface: All the places a system can be exposed or exploited, including logins, APIs, vendors, naming schemes, and internal tools.

🏛️ Power Plays

Anthropic’s Price Tag Floats Into The Stratosphere

While Anthropic is dealing with a Mythos leak headache, it is also reportedly enjoying a very different kind of attention: buyers in the private secondary market are valuing the company near $1T. It's a staggering number, and less a clean financial statement than a signal flare from capital markets about where investor confidence is clustering.

Why it matters

This is the market rewarding revenue clarity, developer traction, and perceived product discipline. In a cycle where everyone talks about scale, investors still seem willing to pay absurd premiums for a company that looks commercially focused and operationally sharp. The GPU bill may still be ugly. The multiple says buyers do not care, at least not yet.

The Deets

- Anthropic’s private secondary shares are reportedly trading near a $1T valuation.

- Some offers were said to be even higher.

- The report also points to Anthropic’s revenue curve and Claude Code traction as drivers of the demand.

Key takeaway

Investors are buying conviction that someone knows how to turn frontier AI into a business.

🧩 Jargon Buster - Secondary shares: Existing shares sold by current holders, rather than new stock issued directly by the company.

🧰 Tools & Products

OpenAI Wants Your Team’s Prompt Graveyard

OpenAI introduced Workspace Agents in ChatGPT, a new layer of Codex-powered shared agents built for teams. The pitch is simple: take the scattered prompts, partial automations and workflows that live across companies, and turn them into reusable team software.

Why it matters

This is a much stronger enterprise story than the original custom GPTs. Shared agents with memory, app connections, Slack presence and scheduled actions are closer to real operational tooling than personalized chatbot sidekicks. If OpenAI gets the permissions and governance right, this could become the default bridge between chat interfaces and actual work.

The Deets

- Workspace Agents are positioned as an evolution of 2023’s GPTs.

- Older GPTs remain active for now, with a conversion tool coming later.

- The agents can retain memory, call connected apps, and run in Slack.

- They can also trigger on schedules while users are offline.

- OpenAI reportedly uses them internally for sales research, follow-up drafts, journal entries, and reconciliations.

Key takeaway

OpenAI is now selling shared agency across teams.

🧩 Jargon Buster - Agent: An AI system that can take actions across tools and workflows, not just generate text in a chat box.

AI Writing Hack: Separate To Improve

The Rundown’s best practical tip today is a two-step dictation workflow for writing internal docs: use voice to create an initial draft, then force the AI to create a separate working version instead of polishing the first pass in place. It is a small process change, but it solves a very real problem: once AI “cleans up” your rough draft too early, your own thinking tends to get paved over.

Why it matters

Most AI writing workflows fail because they optimize for fluency too soon. This one preserves the ugly but useful first layer of thought, then uses revision comments to train the model toward your tone over time. That makes it less like autocomplete and more like editorial memory.

The Deets

- The Rundown recommends dictating with Typeless, then opening a Codex or Claude Code session and prompting the model to draft an outline for a memo.

- The important instruction: save that initial draft without editing it, then create a separate working draft for revision.

- From there, dictate comments on what is wrong, missing, or too generic, then ask the agent to rewrite in your tone, using your wording and no em dashes.

- The final twist: compare the untouched draft and final version weekly so an agent can infer your editorial rules.
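For the curious, the weekly comparison step can be approximated with a few lines of Python. This is a minimal sketch, not part of The Rundown's workflow or any Typeless, Codex, or Claude Code API: it assumes both drafts are saved as plain text, and the function names `editorial_changes` and `count_em_dashes` are hypothetical. It lists each phrase you cut or replaced, which is the raw material an agent would need to infer your editorial rules.

```python
import difflib


def editorial_changes(raw_draft: str, final_draft: str) -> list[tuple[str, str]]:
    """Return (original phrase, revised phrase) pairs where the drafts differ.

    Hypothetical helper: compares the untouched draft with the final version
    word by word. An empty string means text was deleted or inserted outright.
    """
    raw_words = raw_draft.split()
    final_words = final_draft.split()
    matcher = difflib.SequenceMatcher(a=raw_words, b=final_words)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # 'replace', 'delete', or 'insert'
            changes.append((" ".join(raw_words[i1:i2]), " ".join(final_words[j1:j2])))
    return changes


def count_em_dashes(text: str) -> int:
    """Count em dash characters (U+2014) so the 'no em dashes' rule can be checked."""
    return text.count("\u2014")


if __name__ == "__main__":
    raw = "We need to basically rethink the onboarding flow"
    final = "We need to rethink the onboarding flow"
    print(editorial_changes(raw, final))
    print(count_em_dashes(final))
```

Running it on the sample drafts shows the filler word "basically" being cut. Collected over a week, pairs like this are what you would hand to an agent as evidence of your style.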

💸 Funding & Startups

Capital Is Picking The Adults In The Room

Today’s funding story is less about a single round and more about where money seems to be leaning. Anthropic’s secondary-market surge and Cursor’s giant SpaceX pact both point to the same thesis: capital wants products with visible traction, not just grand theories and GPU bonfires.

Why it matters

The market is getting choosier, even when the numbers still look cartoonishly large. Investors appear increasingly drawn to companies that can point to developers, workflows, and repeatable revenue. In other words, the AI gold rush is maturing from “who has the biggest model” into “who has a business people actually use.”

The Deets

- Anthropic is reportedly trading near a $1T private valuation on the secondary market.

- Cursor locked in a SpaceX partnership with $10B guaranteed.

- SpaceX also holds an option to acquire Cursor for $60B.

- Both stories point to investor appetite for commercial momentum over pure scale.

Key takeaway

AI capital still loves spectacle. It just likes commercial discipline even more.

🧩 Jargon Buster - Monetization clarity: How easy it is for investors to understand where a company’s revenue comes from and how that revenue could grow.

🧪 Research & Models

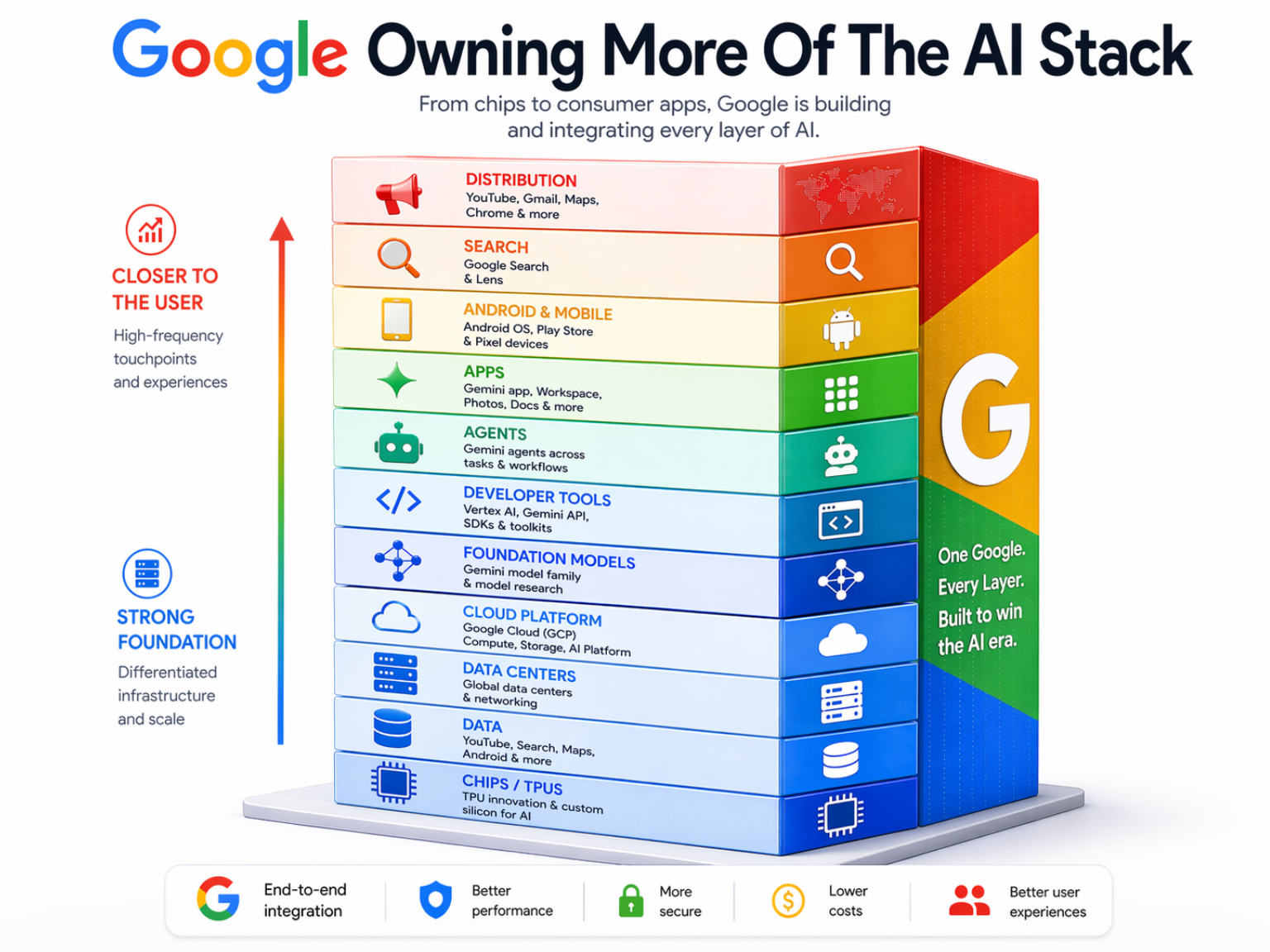

Google Is Rewiring The AI Factory Floor

Google’s latest TPU push looks less like a chip refresh and more like a structural rewrite of its AI stack. The company is separating training and inference hardware, tightening control of more of the infrastructure chain, and trying to make AI compute feel less like rented oxygen from Nvidia.

Why it matters

Owning more of the stack means more leverage on cost, margin, and scale. If Google can design the chips, tune the workloads, and pipe them through its own cloud and software layers, it gains a real strategic edge.

The Deets

- Google unveiled 8th-generation TPUs aimed at agent workloads.

- The new design separates training and inference into different chips.

- AI Secret says Google is also deepening control over more of the infrastructure stack.

- The company is pairing this work with its own Arm CPUs.

- Google also said 75% of its internal code is now AI-generated.

Key takeaway

Google is trying to make AI infrastructure less dependent on one vendor and more natively its own. That is not flashy... it is strategically huge.

🧩 Jargon Buster - Inference: The stage where an AI model is actually serving answers or actions for users, rather than being trained.

⚡ Quick Hits

Tesla’s autonomy pitch finally hit the hardware wall. AI Secret says Elon Musk acknowledged that millions of Teslas cannot reach unsupervised driving on current hardware, turning “future-ready” into a very expensive asterisk.

Claude Code hit a speed bump with users. The Rundown reports Anthropic faced backlash after removing Claude Code for some new Pro users; the company called it a small signup-flow test.

Ideogram wants your brand guide, not just your prompt. The Rundown says its new Custom Models let users fine-tune image generation on 15 to 100 assets for more consistent visual outputs.

Odyssey scaled up its world model. Odyssey-2 Max is a 3x larger real-time model that topped physics benchmarks and is now in private beta.

Qwen went smaller and somehow stronger. Alibaba’s team open-sourced Qwen3.6-27B, which reportedly beat its own 397B predecessor on major coding benchmarks.

🛠️ Tools Of The Day

Typeless is the quiet productivity winner of the day because it anchors a smarter dictation workflow that preserves your raw thinking before AI starts sanding off your voice.

Gemini Enterprise Agent Platform is Google’s pitch for building and governing enterprise agents at scale, part of the broader race to own internal AI workflows.

ChatGPT Images 2.0 is OpenAI’s next-gen image model, and it is already being positioned as one of the headline creative tools in today’s AI product stack.

Today’s Sources: The Internet, The Rundown, AI Secret